The headline “woman marries AI character made with ChatGPT” has sparked global debate because it sits at the intersection of artificial intelligence, human psychology, and social norms. While the story itself emerged outside Pakistan, the questions it raises are not foreign to Pakistani society. As AI systems become more conversational, emotionally responsive, and integrated into daily life, relationships between humans and digital entities are no longer theoretical. They are happening, and they demand serious discussion rather than sensational reactions.

This case is not about technology alone. It touches personal autonomy, mental health, legal recognition, and how societies understand companionship in an era where machines can simulate empathy, memory, and dialogue.

What does “marrying an AI character” actually mean?

In legal and religious terms, a human cannot marry a non-human entity. The reported case does not involve a state-recognized marriage. Instead, it represents a symbolic or emotional commitment formed by the individual toward an AI character created through conversational AI tools.

These AI characters are typically built using:

- Large language models capable of sustained dialogue

- Memory systems that retain conversation history

- Personality customization based on user input

The emotional bond forms because the AI can respond consistently, attentively, and without social judgment. This creates a sense of companionship that feels stable to the user, even though the AI has no consciousness or legal identity.
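To make the three building blocks listed above concrete, here is a minimal sketch of how a character could be assembled from a persona, a stored conversation history, and a language model. It is an illustration under stated assumptions, not how any particular product works: `CompanionCharacter`, `build_prompt`, and `placeholder_model` are hypothetical names, and the placeholder stands in for a real language model without calling any actual service.

```python
from dataclasses import dataclass, field


@dataclass
class CompanionCharacter:
    """Hypothetical AI character: a persona plus stored conversation history."""
    name: str
    persona: str  # personality customization supplied by the user
    history: list = field(default_factory=list)  # memory: (speaker, text) pairs

    def build_prompt(self, user_message: str) -> str:
        """Assemble the text a language model would receive on this turn."""
        lines = [f"You are {self.name}. Personality: {self.persona}"]
        lines += [f"{speaker}: {text}" for speaker, text in self.history]
        lines.append(f"User: {user_message}")
        return "\n".join(lines)

    def reply(self, user_message: str, model) -> str:
        """Call the model, then store both turns so later prompts include them."""
        answer = model(self.build_prompt(user_message))
        self.history.append(("User", user_message))
        self.history.append((self.name, answer))
        return answer


def placeholder_model(prompt: str) -> str:
    """Stand-in for a real language model (assumption): it only counts prompt lines."""
    return f"(reply generated from {len(prompt.splitlines())} lines of context)"


character = CompanionCharacter(name="Sam", persona="warm, patient, attentive")
first = character.reply("I had a long day.", placeholder_model)
second = character.reply("Do you remember what I said?", placeholder_model)
print(first)
print(second)
```

The second reply is built from more context than the first because the earlier exchange was saved and fed back in. In this sketch, the apparent "memory" and "personality" are simply text placed in front of the model on every turn, which is why the consistency a user experiences does not imply awareness on the system's side.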

Why stories like this attract attention

Human relationships with technology are not new. People have long formed emotional attachments to:

- Fictional characters

- Virtual pets

- Online avatars

- Game personas

What makes this case different is the depth of interaction. AI systems powered by advanced language models can:

- Respond in natural language

- Reflect emotional tone

- Adapt personality traits

- Simulate continuity in conversation

For some individuals, this creates a perceived relationship that feels reciprocal, even though it is technically one-sided.

Psychological dimensions of AI companionship

From a psychological perspective, AI companionship can fulfill certain emotional needs:

- Feeling heard without interruption

- Receiving consistent attention

- Avoiding social conflict or rejection

In societies where loneliness, social pressure, or emotional isolation exist, AI companionship can feel safe. However, mental health professionals generally caution that reliance on non-human relationships for emotional fulfillment may limit real-world social engagement.

In Pakistan, where family structures and community ties traditionally play a strong role, this raises questions about how younger generations process emotional connection in increasingly digital environments.

Cultural context: how Pakistani society may interpret such cases

Pakistani society places strong emphasis on:

- Family approval

- Social bonds

- Religious frameworks for marriage

An emotional commitment to an AI character would not be recognized socially or religiously. However, the underlying reasons behind such behavior—loneliness, social anxiety, or unmet emotional needs—are relevant within local contexts as well.

Ignoring these cases as “foreign oddities” risks missing deeper trends. Increased screen time, remote work, and digital communication have already changed how relationships form and function in Pakistan’s urban centers.

Legal reality: no recognition, no rights

From a legal standpoint, marriage requires two legal persons. An AI system:

- Has no legal identity

- Cannot hold rights or obligations

- Cannot consent in a legal sense

No known jurisdiction, Pakistan included, recognizes marriage between a human and an AI. A symbolic ceremony carries no legal standing, inheritance implications, or marital rights.

This distinction is important because media headlines can blur the line between symbolic action and legal reality.

Ethical questions surrounding AI relationships

Ethical concerns arise not because of the individual’s choice, but because of system design and usage patterns.

Key ethical considerations include:

- Emotional dependency on non-sentient systems

- Lack of informed understanding of AI limitations

- Potential reinforcement of isolation

- Monetization of emotional engagement by AI platforms

Developers and platforms carry responsibility for clearly communicating what AI is and is not. An AI does not feel affection, loyalty, or commitment. It generates responses from statistical patterns in its training data.

For an authoritative account of how such AI systems function, see OpenAI’s official documentation and explanations at https://www.openai.com/.

Technology vs perception: understanding ChatGPT’s role

ChatGPT and similar models operate by predicting the next piece of text from patterns in their training data. They do not possess:

- Consciousness

- Intentions

- Emotional awareness

The illusion of relationship emerges because humans naturally attribute meaning to responsive communication. When a system replies coherently and empathetically, the brain can interpret that as understanding.

This does not mean the technology is dangerous by default. It means users need clear digital literacy about how AI operates.
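The idea of "predicting language patterns" can be shown with a deliberately tiny example. The sketch below counts which word follows which in a short sample text and then returns the most frequent follower. It is a toy: real systems such as ChatGPT use large neural networks rather than word counts, and the sample text and the function names `train_bigrams` and `predict_next` are made up for illustration.

```python
from collections import Counter, defaultdict

# Made-up sample text (assumption); a real model learns from vastly more data.
corpus = "i am here for you . i am listening to you . i am here to help ."


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


model = train_bigrams(corpus)
print(predict_next(model, "i"))      # "am" follows "i" every time in the sample
print(predict_next(model, "am"))     # "here" follows "am" more often than "listening"
print(predict_next(model, "robot"))  # None: the word never appears in the sample
```

A phrase like "i am here for you" can come out of such a process purely because those words often appear together in the text it was built from. Nothing in the counting step involves intention or feeling, which is the point the section above makes about much larger models.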

Media responsibility and narrative framing

Stories like “woman marries AI character made with ChatGPT” often spread rapidly due to shock value. Media framing matters because it shapes public understanding.

Responsible framing should:

- Clarify the symbolic nature of the action

- Avoid presenting it as a legal or social precedent

- Focus on mental health and digital ethics rather than ridicule

In Pakistan, where AI awareness is still developing, misinterpretation could lead to unnecessary fear or unrealistic expectations.

Parallels with other AI use cases in Pakistan

Pakistan already uses AI in practical domains:

- Customer support chatbots

- Banking and telecom services

- Education platforms

- Property data analysis

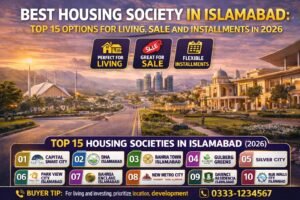

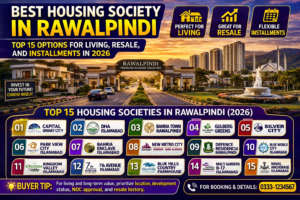

In real estate, for example, AI tools are used to structure information, not replace human judgment. Platforms such as Property AI apply AI to organize listings and data for Islamabad and Rawalpindi, supporting decision-making without substituting legal or emotional responsibility.

The difference between productive AI use and emotionally substitutive use lies in boundaries and intent.

Social isolation and digital substitutes

Urban life, economic pressure, and changing social norms have increased isolation globally. Digital substitutes often fill gaps where:

- Social trust feels fragile

- Emotional vulnerability feels risky

- Human interaction feels unpredictable

AI companionship is rarely the original cause of isolation; it tends to appear where isolation already exists. Addressing the root causes matters more than reacting to the symptom.

Religious and moral considerations in Pakistan

Islamic teachings emphasize marriage as a social contract between two individuals with rights and responsibilities. An AI entity does not fit within this framework.

From a moral perspective, scholars are more likely to focus on:

- Mental well-being of the individual

- Avoidance of deception or delusion

- Encouragement of healthy human relationships

This places responsibility on families, educators, and policymakers to engage with digital realities thoughtfully rather than dismissively.

Regulation and future considerations

As AI becomes more personalized, policymakers may need to consider:

- Clear labeling of AI companions

- User education requirements

- Mental health safeguards

Regulation does not mean banning technology. It means setting expectations that protect users from misunderstanding AI capabilities.

Pakistan’s regulatory conversations around AI are still emerging, making public awareness especially important.

Separating novelty from long-term impact

One viral story does not define a future trend. However, it signals areas that require attention:

- Digital literacy

- Emotional health in digital spaces

- Ethical AI deployment

The long-term impact depends on how societies respond—not with panic, but with clarity.

What this means for ordinary users

For everyday users, the takeaway is simple:

- AI can support tasks, information, and organization

- AI should not replace real human relationships

- Understanding limitations protects against unrealistic expectations

Balanced engagement ensures technology remains a tool rather than a substitute for social connection.

FAQs

Is it legally possible to marry an AI character?

No. AI systems have no legal identity, and no country is known to recognize marriage between a human and an AI.

Does ChatGPT encourage emotional relationships?

No. ChatGPT generates text responses based on patterns and user input. Emotional attachment comes from human interpretation, not AI intent.

Is forming emotional attachment to AI harmful?

It depends on the level of reliance. Limited interaction is generally harmless, but replacing human relationships with AI companionship may raise mental health concerns.

Can AI understand love or commitment?

No. AI does not experience emotions or awareness. It simulates language that can appear emotionally responsive.

Why is this topic relevant to Pakistan?

As AI tools become common in daily life, understanding their limits helps prevent misuse, misinterpretation, and emotional dependency.

Disclaimer

This information is for awareness only and is subject to change. Readers should independently assess legal, ethical, and social implications and verify details from official and authoritative sources.